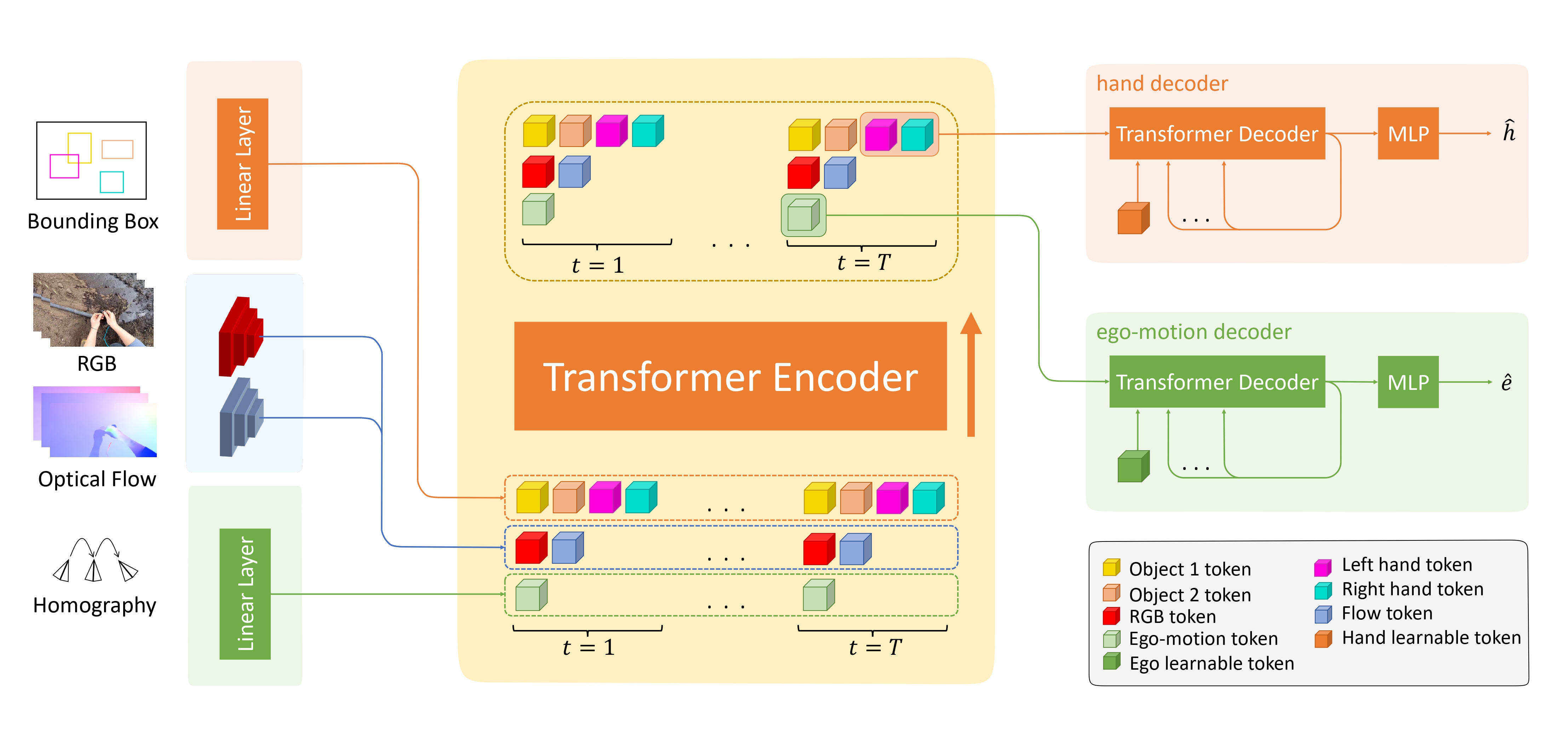

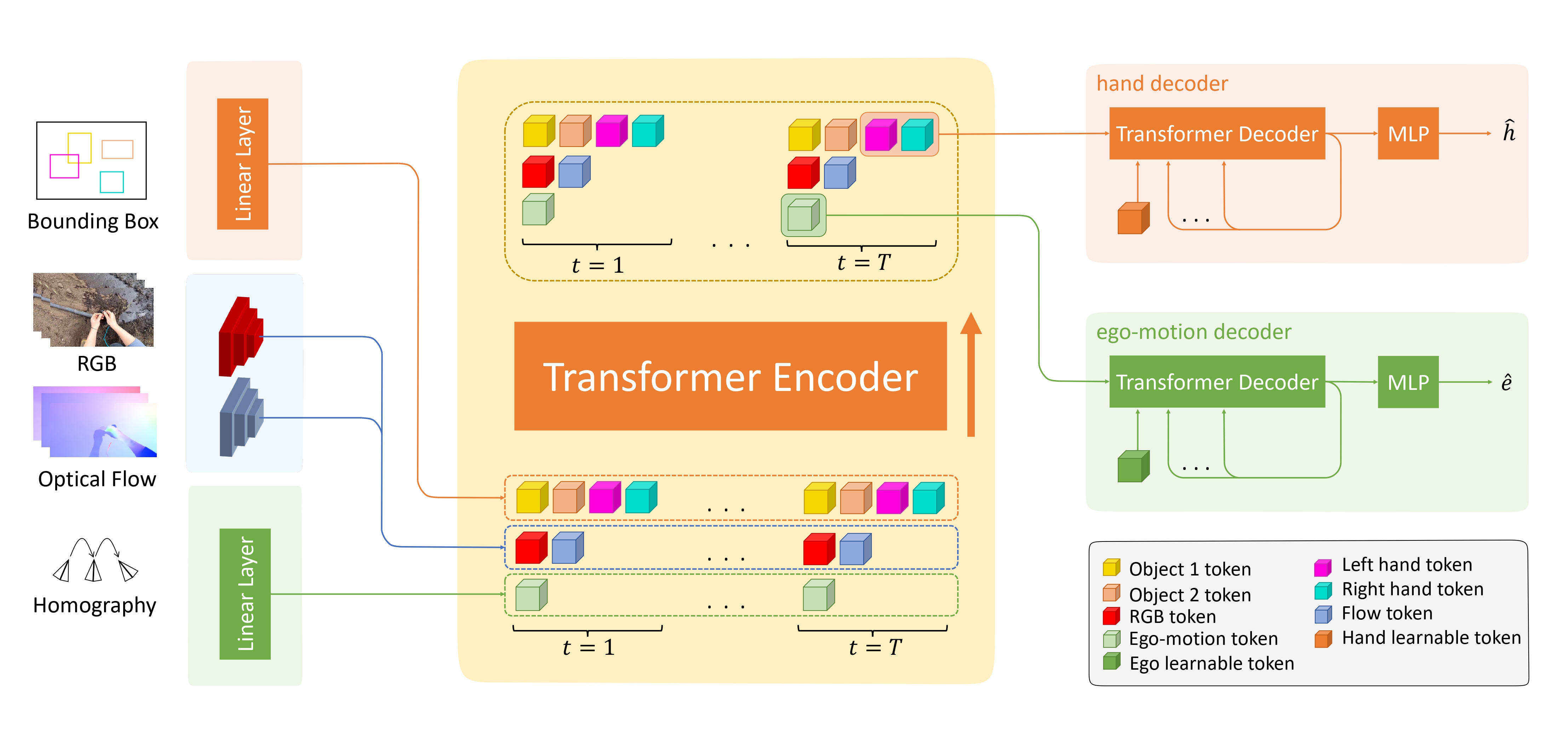

Approach

Predicting future human behavior from egocentric videos is a challenging but critical task for human intention understanding. Existing methods for forecasting 2D hand positions rely on visual representations and mainly focus on hand-object interactions. In this paper, we investigate the hand forecasting task and tackle two significant issues that persist in existing methods: (1) 2D hand positions in future frames are severely affected by ego-motion in egocentric videos; (2) prediction based on visual information tends to overfit to background or scene textures, which hinders generalization to novel scenes or human behaviors. To address these problems, we propose EMAG, an ego-motion-aware and generalizable 2D hand forecasting method. In response to the first problem, we propose a method that considers ego-motion, represented as a sequence of homography matrices, each relating two consecutive frames. To alleviate the second issue, we further leverage modalities such as optical flow, trajectories of hands and interacting objects, and ego-motion. Extensive experiments on two large-scale egocentric video datasets, Ego4D and EPIC-Kitchens 55, verify the effectiveness of the proposed method. In particular, our model outperforms prior methods by 1.7% and 7.0% on intra- and cross-dataset evaluations, respectively. The code and preprocessed data will be made available.
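To make the ego-motion representation concrete, the following is a minimal sketch of what a sequence of per-frame-pair homographies encodes. It is not the EMAG implementation: the function names (`apply_homography`, `matmul3`, `stabilize_track`), the convention that each matrix maps frame t+1 coordinates to frame t coordinates, and the toy camera pan are all assumptions made for illustration. In practice the homographies would be estimated from the video rather than written by hand.

```python
from typing import List, Sequence, Tuple

Matrix = List[List[float]]
Point = Tuple[float, float]

IDENTITY: Matrix = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]


def apply_homography(H: Sequence[Sequence[float]], p: Point) -> Point:
    """Map a 2D point through a 3x3 homography using homogeneous coordinates."""
    x, y = p
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("point maps to infinity under this homography")
    return (u / w, v / w)


def matmul3(A: Sequence[Sequence[float]], B: Sequence[Sequence[float]]) -> Matrix:
    """Multiply two 3x3 matrices."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def stabilize_track(track: Sequence[Point], steps: Sequence[Matrix]) -> List[Point]:
    """Express every point of `track` in the image coordinates of frame 0.

    Assumed convention (hypothetical, for this sketch only):
    steps[t] maps frame t+1 coordinates to frame t coordinates.
    """
    if len(steps) != len(track) - 1:
        raise ValueError("need exactly one homography per consecutive frame pair")
    cumulative = IDENTITY
    stabilized = [apply_homography(cumulative, track[0])]
    for H, p in zip(steps, track[1:]):
        # Chain the pairwise maps: frame t+1 -> frame t -> ... -> frame 0.
        cumulative = matmul3(cumulative, H)
        stabilized.append(apply_homography(cumulative, p))
    return stabilized


# Toy example: the camera pans so that the scene shifts 5 px left per frame.
# A hand that is still in the world therefore drifts left in the image.
pan = [[1.0, 0.0, 5.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
observed = [(100.0, 50.0), (95.0, 50.0), (90.0, 50.0)]
stabilized = stabilize_track(observed, [pan, pan])
print(stabilized)
```

In this toy case the observed hand positions drift only because of the camera pan, and chaining the homographies maps all three back to (100.0, 50.0). This illustrates why raw 2D hand positions alone conflate hand motion with ego-motion.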

@inproceedings{Hatano2024EMAG,
  author    = {Hatano, Masashi and Hachiuma, Ryo and Saito, Hideo},
  title     = {EMAG: Ego-motion Aware and Generalizable 2D Hand Forecasting from Egocentric Videos},
  booktitle = {European Conference on Computer Vision Workshops (ECCVW)},
  year      = {2024},
}